Malware Analysis Using Memory Forensics

Once malware is found and identified, the question is not how to clean it, but what it has done. The technology staff can re-image the computer or run their favorite set of anti-malware tools to clean it. But the questions remain: what has the malware done, what information has been stolen, and how will this hurt the business? Many of these answers might only be found through malware analysis and forensics.

Microsoft Windows is still the dominant operating system and as such is still targeted most often [1]. This blog will focus on Windows 7 as the victim with a goal to infect and collect data to analyze later. A simple toolkit will be created using free tools to help automate collection.

Malware analysis can be very simple or very complex. The complexity depends on what information the analyst is looking for. The goal of this article is to introduce a process, built on free tools, that an entry-level analyst can use to collect data. It is also important to collect data in a way that lets a senior analyst review it, if needed.

Related Work

There are several commercial and free tools to help analyze malware found in memory. Mandiant Redline [2] and Volatility [3] are two popular examples. However, before data can be analyzed, it must first be collected.

It is important to have a standard set of procedures when collecting data [4]. The investigator may only have one chance to collect volatile data. As the research conducted by N. Davis [4] shows, along with several others, collecting data is a very important step. Without proper collection there can be no further analysis.

Below is a list of items that should be considered when collecting data during an incident. Not all the items are always needed to analyze malware.

• Physical memory

• Network connections, open TCP or UDP ports

• NetBIOS

• Currently logged on user / user accounts

• Current executing processes and services

• Scheduled jobs

• Windows registry

• Browser auto-completion data, passwords

• Screen capture

• Chat logs

• Windows SAM files / NTUser.dat files

• System logs

• Installed applications and drives

• Environment variables

• Internet history

Common Malware Analysis Techniques

With malware analysis there are two general approaches: static analysis and dynamic analysis. Another approach is to use malware forensics, also called live forensics.

Both static and dynamic collection can consist of creating an image copy of the hard disk drive or simply using the malware binary file, if available. Once the analyst has a binary they can perform their analysis in a controlled lab environment. If the analyst has an image copy of the victim’s computer, they can restore that in a lab environment for their analysis. Both static and dynamic analysis are common when examining binaries.

There are several issues with these approaches. One shortcoming of static or dynamic analysis is that the binary may not be available. The other problem is the need for a controlled lab environment to analyze malware. In some cases this might be required. However, if the analyst can determine that malware did not steal data, they can often end the investigation and finish the documentation with firm evidence that data was not compromised. Further analysis will need to be completed if malware is suspected of stealing data.

Malware forensics offers a good opportunity to determine whether data was stolen. Malware will often delete its binary, encrypt itself, or otherwise leave the original file corrupt, which is why the binary may be missing. This blog will provide a brief overview of static and dynamic analysis, but will focus on malware forensics afterwards.

A. Static Analysis

Static analysis can be broken into basic and advanced. Basic static analysis observes the malware without actually running the executable [6]. Malware can be detected by anti-virus software, or an intrusion detection system might alert a technician. This is how support staff will most likely confirm infection. For basic static analysis you can use a program like strings, which is found in the Sysinternals tools [7]. When a developer writes a program that prints a message, connects to a URL, or copies a file to a specific location [8], those values are stored in the binary as strings. An analyst can take the binary and print its strings. Figure 2 shows a clipped example of what an analyst might see.

Usually, the strings program will provide details about the binary. However, if the output from strings provides little or no detail, the binary is most likely obfuscated. Obfuscation is an evasion technique used to hide program details, such as strings and logic, to prevent analysis [9].
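To make the idea concrete, here is a minimal Python sketch of what a strings-style tool does: scan raw bytes for runs of printable characters, in both ASCII and UTF-16LE form. It is an illustration only, not the Sysinternals implementation; the function name `extract_strings`, the 4-character minimum, and the sample bytes (including the URL) are assumptions made for this example.

```python
import re


def extract_strings(data: bytes, min_len: int = 4) -> list[str]:
    """Return printable ASCII and UTF-16LE strings of at least min_len characters."""
    # Runs of printable ASCII bytes (space through tilde).
    ascii_pat = re.compile(rb"[\x20-\x7e]{%d,}" % min_len)
    # Runs of printable characters each followed by a NUL byte (UTF-16LE text).
    wide_pat = re.compile(rb"(?:[\x20-\x7e]\x00){%d,}" % min_len)

    found = [m.group().decode("ascii") for m in ascii_pat.finditer(data)]
    found += [m.group().decode("utf-16-le") for m in wide_pat.finditer(data)]
    return found


# Hypothetical bytes standing in for part of a binary; not taken from real malware.
SAMPLE = (
    b"\x00\x01MZ\x90\x00"
    + b"http://example.test/gate.php"
    + b"\x00\xff\xfe"
    + "cmd.exe /c copy".encode("utf-16-le")
)

for text in extract_strings(SAMPLE):
    print(text)
```

If a scan like this returns almost nothing readable for a binary of meaningful size, that is the same hint of obfuscation or packing described above.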

Advanced static analysis requires a person to disassemble the executable [10]. The learning curve for reverse engineering is steep. Even if the organization's existing staff know how to reverse engineer software, the binary is still needed. Focusing on malware forensics while utilizing a toolkit will provide a consistent way to collect data, with the option to extract the binary.

B. Dynamic Analysis

Dynamic analysis can also be broken into basic and advanced. Basic dynamic analysis requires a person to set up a controlled lab, run the malware, and observe the behavior [11]. Advanced dynamic analysis also requires a lab and the use of a debugger to watch the behavior [12]. Both can be very time-consuming and require advanced knowledge. The analyst needs to be aware of self-modifying code and packers while performing dynamic analysis. Self-modifying code changes itself if it suspects analysis is being performed. For example, if an analyst attempts to debug the code, it could alter itself, or the malware could produce the wrong binary when being disassembled [13]. A packer obfuscates the code and makes the malware more difficult to analyze [14].

C. Malware Forensics

Live forensics is used to collect system information before the infected system is powered down. All random access memory (RAM) is volatile storage. Volatile storage will only maintain its data while the device is powered on [15]. This is one reason why preserving volatile data is important for malware analysis. Once the computer is powered off, the volatile information will be lost, along with everything important it contained. Volatile data might contain keys to decrypt information. The investigator can also extract binaries from it to perform static or dynamic analysis.

During the incident response volatile data must be collected first [16]. If a RAM dump is not performed first then the system state might change.

The first responder should have a toolkit and a standard, repeatable process they can use to collect system information. Because further forensic analysis is sometimes needed, the collection process should leave the data in a form that other internal staff can review or that can be sent to an outside expert. The toolkit should also create a hash of each collected file for integrity purposes.

STREAMLINE MALWARE ANALYSIS

One of the problems with malware analysis is untrained staff collecting system data. The person should not have to be an advanced forensics expert or malware expert to collect data. Furthermore, when finished collecting data a well-trained system administrator might use their existing knowledge to determine what is and is not normal.

A. Determine the best approach

Malware analysis must begin with collecting system information. System information can be collected on a live system or a system that has been shut down.

We have determined that traditional collection methods, which start with the system being shut down, may not be the best option. If volatile memory is not collected, the analyst might miss important data. Therefore, the best approach for our goal is to collect system information before the system is shut down. If advanced static or dynamic analysis needs to be completed later, malware forensics will not hinder that process. If the first responder wanted to be extra thorough, they could collect data from the live system first, then image the system with traditional methods.

This will ensure that enough information has been collected in the event further analysis is needed.

The first responder can choose between local or remote data collection. Remotely gathering data might take a long time since some systems contain a large amount of data. The speed would also depend on the network connection. The investigator needs to consider if there are firewalls or other security devices in the path.

Gathering data locally would require storage media large enough to hold the results but would be quicker. This blog will focus on gathering information from a local machine, saving output to an external device.

B. Information that needs to be collected

Knowing what information to collect is important. The following information will be collected by the toolkit:

1. Acquire system memory: The toolkit uses Belkasoft RAM Capturer to collect the volatile data [17]. RAM Capturer is a free tool that saves the volatile data in .mem format. This is important so that other programs, like Volatility, can analyze the data.

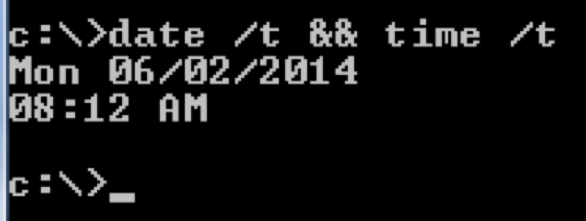

2. Document system date and time: The system date and time are collected second, and again as the last item. This provides a timeline of events. The commands date /t and time /t will be used. This information will be saved in the investigation.txt file. Figure 3 shows an example of the date /t and time /t commands:

3. System Details:

The third item to be collected will be system details, such as the hostname, uptime, operating system details, and so on. The toolkit will use the Sysinternals tool psinfo [18].

4. Logged on user:

To help find intruders logged into a system, the Sysinternals tool logonsessions [19] will be used. This information will be saved to the logonsessions.txt file.

5. Network Information:

The toolkit will need to thoroughly collect network connections and activity. This helps the investigator determine whether malware stole data by showing whether a process is connecting to a remote host. Information will be saved to the network.txt file. For this the toolkit will use the following command line options or tools:

a. netstat -abo. The "a" switch displays all connections and listening ports. The "b" switch displays the executable responsible for creating each connection or listening port. The "o" switch shows the PID of the process that owns the connection.

b. ipconfig /displaydns. This command displays the contents of the DNS resolver cache, which reflects recent DNS queries made by the system. Collecting this information helps determine if malware is connecting to a domain name.

c. tcpvcon -a. This Sysinternals tool [20] shows all TCP and UDP endpoints, similar to the netstat command. This will help determine if a process is connecting to a local or remote host. Information will be saved to the netconnections.txt file. Further analysis can be completed using the PID of the process.
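As a rough illustration of how the saved netstat output can be reviewed, the Python sketch below parses text in the layout that netstat -abo prints and lists established connections to non-local addresses together with the owning PID and executable. The sample text is invented for this example (only the process name msidcr140.exe and PID 4000 echo the case study later in this article), and the names `parse_netstat`, `external_connections`, and `LOCAL_NETS` are assumptions, not part of the toolkit.

```python
import ipaddress
from dataclasses import dataclass

# Address ranges treated as "local" in this sketch (an assumption; adjust per network).
LOCAL_NETS = [
    ipaddress.ip_network(n)
    for n in ("10.0.0.0/8", "172.16.0.0/12", "192.168.0.0/16", "169.254.0.0/16")
]


@dataclass
class Connection:
    proto: str
    local: str
    remote: str
    state: str
    pid: int
    image: str = ""


def parse_netstat(text: str) -> list[Connection]:
    """Parse netstat -abo style output into Connection records."""
    conns: list[Connection] = []
    for line in text.splitlines():
        parts = line.split()
        if not parts:
            continue
        if parts[0] in ("TCP", "UDP") and parts[-1].isdigit():
            if len(parts) == 5:      # TCP rows carry a State column
                proto, local, remote, state, pid = parts
            elif len(parts) == 4:    # UDP rows have no State column
                proto, local, remote, pid = parts
                state = ""
            else:
                continue
            conns.append(Connection(proto, local, remote, state, int(pid)))
        elif conns and line.strip().startswith("[") and line.strip().endswith("]"):
            # With -b, the executable name follows the connection row in brackets.
            conns[-1].image = line.strip()[1:-1]
    return conns


def is_external(endpoint: str) -> bool:
    """True if the endpoint's address is not loopback, unspecified, or in LOCAL_NETS."""
    host = endpoint.rsplit(":", 1)[0].strip("[]")
    try:
        ip = ipaddress.ip_address(host)
    except ValueError:
        return False  # for example "*" on unbound UDP sockets
    if ip.is_loopback or ip.is_unspecified:
        return False
    return not any(ip in net for net in LOCAL_NETS)


def external_connections(conns: list[Connection]) -> list[Connection]:
    return [c for c in conns if c.state == "ESTABLISHED" and is_external(c.remote)]


# Invented sample output for illustration only.
SAMPLE_NETSTAT = """
Active Connections

  Proto  Local Address          Foreign Address        State           PID
  TCP    0.0.0.0:135            0.0.0.0:0              LISTENING       716
  RpcSs
 [svchost.exe]
  TCP    192.168.1.10:49162     203.0.113.5:80         ESTABLISHED     4000
 [msidcr140.exe]
  UDP    0.0.0.0:5355           *:*                                    1100
  Dnscache
 [svchost.exe]
"""

for conn in external_connections(parse_netstat(SAMPLE_NETSTAT)):
    print(conn.pid, conn.image, conn.remote)
```

The PID reported here is the link to the process and DLL listings collected in the next step.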

6. Processes and Threads:

The malware, if running on the system, should be found within the processes. Collecting this information will be beneficial for conducting further analysis. The toolkit will use the following command line options or tools:

a. tasklist /V. This will show the name of the process, PID, memory usage, process status, and the user who owns the process.

This information will be saved in the processinfo.txt file.

b. handle -a. This tool, found in the Sysinternals suite [20], shows which programs have a file open. The "a" switch extends the output to all handle types, not just files. It will be useful to see if malware is accessing objects on the computer. This information will be saved in the processinfo_handles.txt file.

c. listdlls. This is another Sysinternals tool [21], used to view the DLLs loaded by each process. This will help determine if malware is injecting DLLs. This information will be saved in the processinfo_dlls.txt file.

Much modern malware hides itself from tools such as tasklist. However, the analyst can use the collected RAM dump and Volatility to find hidden processes.

7. Services and Drivers:

To determine if malware is running as a service, the toolkit will use the Sysinternals autorunsc [22] tool with the switch -s. This will show auto-start services and non-disabled drivers.

8. Scheduled Tasks:

One common tactic malware uses to restart itself at system boot is a scheduled task. The toolkit will use the Sysinternals autorunsc [22] tool with the switch -t. This information will be saved to the scheduledtasks.txt file.

9. Network Shares and Mapped Drives:

At times malware will infect the system and spread through network shares. The toolkit will use the following commands to show network shares and mapped drives:

a. net share

b. net use

10. Files and Associated Hashes:

This may not collect everything on a system but may collect some common executables, whose hashes can be compared against known-good values for integrity purposes. The toolkit will use the Sysinternals autorunsc [22] tool with the switch -f. This information is saved to the FileHashes.txt file.

11. System Events:

Having the system events might be useful to determine what is happening on the system. The toolkit will use sysinternals psloglist [23]. This information will be saved to the events.txt file.

The output information is saved into several text files. The investigation.txt file will list all the text files along with their associated MD5 and SHA1 hashes for integrity purposes. Not all of the information is critical to collect, and some may be redundant. However, the investigator may only have one chance to collect, so more information is better than not enough. The analyst can also extract many of the same system details from the RAM dump, so the text files and the RAM dump complement each other.
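The hashing step can be sketched in a few lines of Python. This is an illustration of the idea, not the toolkit's actual script: the function names `file_hashes` and `write_manifest` and the exact line layout written to investigation.txt are assumptions.

```python
import hashlib
from pathlib import Path


def file_hashes(path, chunk_size: int = 1 << 20) -> tuple[str, str]:
    """Return (MD5, SHA1) hex digests, reading the file in chunks so large dumps fit in memory."""
    md5, sha1 = hashlib.md5(), hashlib.sha1()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            md5.update(chunk)
            sha1.update(chunk)
    return md5.hexdigest(), sha1.hexdigest()


def write_manifest(out_dir, manifest_name: str = "investigation.txt") -> list[str]:
    """Append one line per collected file, with its hashes, to the manifest file."""
    out_dir = Path(out_dir)
    lines = []
    for path in sorted(out_dir.iterdir()):
        # Skip the manifest itself; its content changes as lines are appended.
        if path.is_file() and path.name != manifest_name:
            md5, sha1 = file_hashes(path)
            lines.append(f"{path.name}  MD5={md5}  SHA1={sha1}")
    with open(out_dir / manifest_name, "a", encoding="utf-8") as fh:
        fh.write("\n".join(lines) + "\n")
    return lines
```

A reviewer who later receives the files can recompute the digests and compare them with the manifest to confirm that nothing changed after collection.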

C. Automate the toolkit

In order to streamline the collection and analysis, an automated toolkit is essential. The toolkit should be simple enough that an untrained person can plug it in, start the collection, and wait for the collection to finish. Most tools come from Sysinternals [24], which is a set of programs created by Mark Russinovich and Bryce Cogswell. The toolkit will use scripts to go through the collection process in a seamless manner. This will provide a consistent way to collect system information for each incident response case. The scripts will also help prevent human errors. Furthermore, there will be additional scripts available to analyze the .mem file with Volatility.
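The toolkit itself is driven by a batch file, but the automation pattern is easy to show in Python: walk a fixed list of commands, append each command's output to its designated text file, and keep going if one tool fails. The sketch below is an illustration under assumptions. The name `run_plan`, the section header format, the timeout, and the subset of commands in `COLLECTION_PLAN` are choices made for this example; the commands and file names themselves are the ones described above.

```python
import subprocess
from pathlib import Path

# (output file, command) pairs drawn from the text; this subset and ordering are illustrative.
COLLECTION_PLAN = [
    ("network.txt", ["netstat", "-abo"]),
    ("network.txt", ["ipconfig", "/displaydns"]),
    ("processinfo.txt", ["tasklist", "/V"]),
    ("processinfo_dlls.txt", ["listdlls"]),
    ("events.txt", ["psloglist"]),
]


def run_plan(plan, out_dir, timeout: int = 600):
    """Run each command, append its output to the named file, and record the exit status."""
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    results = []
    for filename, command in plan:
        try:
            proc = subprocess.run(
                command, capture_output=True, text=True,
                errors="replace", timeout=timeout, check=False,
            )
            output, status = proc.stdout + proc.stderr, proc.returncode
        except (OSError, subprocess.TimeoutExpired) as exc:
            # A missing or hung tool should not stop the rest of the collection.
            output, status = f"ERROR: {exc}\n", None
        with open(out_dir / filename, "a", encoding="utf-8") as fh:
            fh.write(f"===== {' '.join(command)} =====\n{output}\n")
        results.append((filename, command, status))
    return results
```

On the victim system this would be called as `run_plan(COLLECTION_PLAN, "E:/case001")`, where the external drive path is hypothetical. Recording a failure in the output file, rather than aborting, matches the goal of a process an untrained responder can start and leave running.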

CASE STUDY: STREAMLINING THE COLLECTION AND ANALYSIS

Environment

A lab was set up to demonstrate the toolkit. The lab consists of:

- Virtual environment using VMware Workstation.

- Isolated network, using FakeNet [25] to simulate a live network.

- Windows 7 Professional 32-bit operating system.

- 2 GB RAM installed

- Sample malware

Collection Process

The steps are as follows:

- Connect the USB drive containing the toolkit.

- Double click the collect.bat file.

- Close the RAM dump window when finished.

- Wait for the finished prompt. Note: this process can take a few minutes to a few hours depending on the amount of data being collected. It is important to wait for the finished prompt.

Analyze The Results

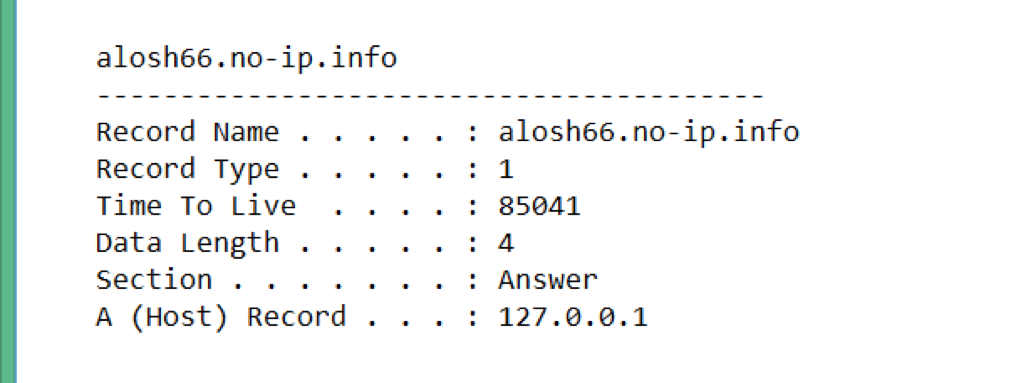

Output from the DNS queries shows that the malware made a DNS query to alosh66.no-ip.info, as shown in Figure 5.

Note: The A (Host) record points to 127.0.0.1 because FakeNet is answering the DNS queries within the lab. If this were a production system connected to the Internet, the actual public IP would show. The query confirms that the malware was trying to connect to a public host.
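A short Python sketch shows how the saved ipconfig /displaydns output could be screened for names like this one. The sample text follows the layout that command prints; the alosh66.no-ip.info record and its 127.0.0.1 answer come from the case above, while the second record, the TTL values, the allowlist in `EXPECTED_SUFFIXES`, and the function names are assumptions made for illustration.

```python
def parse_dns_cache(text: str) -> list[dict]:
    """Parse ipconfig /displaydns style output into records with a name and record data."""
    records: list[dict] = []
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if not sep:
            continue  # headings, separators, and blank lines carry no "key : value" pair
        key = key.strip().rstrip(" .")  # drop the dotted leader after the field name
        value = value.strip()
        if key == "Record Name":
            records.append({"name": value, "data": []})
        elif key.endswith("Record") and records:
            # Lines such as "A (Host) Record" or "CNAME Record" hold the answer.
            records[-1]["data"].append(value)
    return records


# Hypothetical allowlist of domains considered normal for this environment.
EXPECTED_SUFFIXES = (".example.com",)


def unexpected_names(records: list[dict]) -> list[str]:
    return [r["name"] for r in records if not r["name"].endswith(EXPECTED_SUFFIXES)]


SAMPLE_DNS = """
Windows IP Configuration

    alosh66.no-ip.info
    ----------------------------------------
    Record Name . . . . . : alosh66.no-ip.info
    Record Type . . . . . : 1
    Time To Live  . . . . : 86400
    Data Length . . . . . : 4
    Section . . . . . . . : Answer
    A (Host) Record . . . : 127.0.0.1

    www.example.com
    ----------------------------------------
    Record Name . . . . . : www.example.com
    Record Type . . . . . : 1
    Time To Live  . . . . : 300
    Data Length . . . . . : 4
    Section . . . . . . . : Answer
    A (Host) Record . . . : 93.184.216.34
"""

print(unexpected_names(parse_dns_cache(SAMPLE_DNS)))
```

An allowlist is only one possible screening rule. A system administrator who knows the environment may simply read the parsed names and spot what does not belong.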

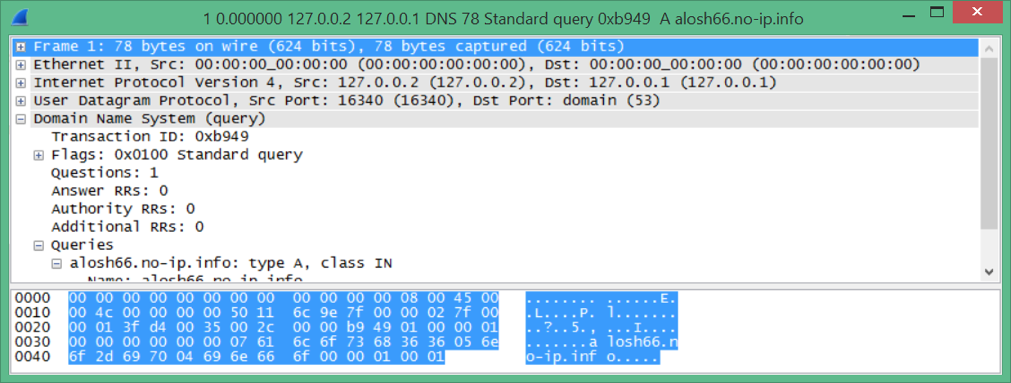

However, it is unclear if information was being transferred. Using a tool called bulk extractor [26], the memory dump can be scanned and a .pcap file extracted. The .pcap file can be opened in a program, such as Wireshark [27].

Using Wireshark, the analyst can determine if data was stolen, as shown in Figure 6.

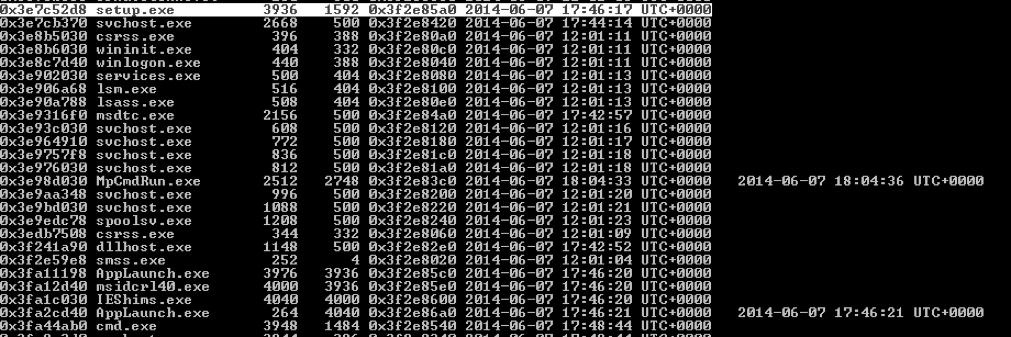

The analyst can use Volatility to locate hidden processes, see what processes are being spawned, or possibly extract the executables. Figure 7 shows a clipped screenshot with output from the Volatility command psscan.

This example demonstrates how the analyst can review what other processes were spawned from the original malware. In this case setup.exe spawned several other processes, including msidcr140.exe with a PID of 4000 and IEShims.exe with a PID of 4040. This is useful to know in case the analyst finds a process connected to an outside IP address, because the analyst can then determine whether that process is associated with the malware.
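Because psscan reports a parent PID (PPID) for each process, tracing what a suspicious process spawned is a simple graph walk. The Python sketch below assumes the rows have already been reduced to (name, PID, PPID) tuples. The PIDs for msidcr140.exe and IEShims.exe are the ones reported above; every other value, including the PID used for setup.exe, is hypothetical.

```python
from collections import defaultdict


def descendants(rows, root_pid: int) -> list[tuple[str, int]]:
    """Return (name, pid) for every process spawned directly or indirectly by root_pid."""
    children = defaultdict(list)
    for name, pid, ppid in rows:
        children[ppid].append((name, pid))

    found, stack, seen = [], [root_pid], {root_pid}
    while stack:
        parent = stack.pop()
        for name, pid in children[parent]:
            if pid not in seen:  # guard against loops caused by inconsistent data
                seen.add(pid)
                found.append((name, pid))
                stack.append(pid)
    return found


# (name, PID, PPID) rows; values other than PIDs 4000 and 4040 are hypothetical.
ROWS = [
    ("explorer.exe", 1500, 1400),
    ("setup.exe", 3900, 1500),
    ("msidcr140.exe", 4000, 3900),
    ("IEShims.exe", 4040, 3900),
    ("svchost.exe", 700, 500),
]

for name, pid in descendants(ROWS, 3900):
    print(pid, name)
```

One caveat: a memory scan can include processes that have already exited, and Windows reuses PIDs, so a parent and child matched by number alone should be checked against their creation times before drawing conclusions.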

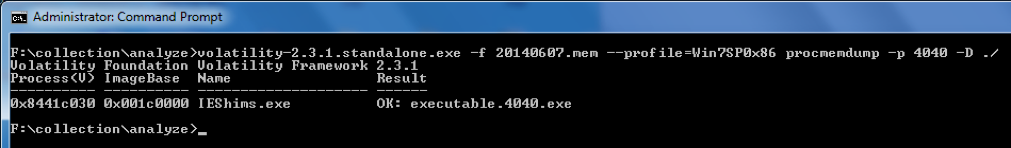

Figure 8 shows an example of extracting an executable out of the RAM dump using the Volatility procmemdump command.

Conclusion

Analyzing malware starts with collecting enough data to analyze. As mentioned earlier, the collection process is a critical step. Having a standard process in place will provide consistent results. The automated toolkit provides an easy way for field technicians to collect data, and it automates several operations that would otherwise be prone to human error.

In the sample case the collection process took about two hours to complete. Not all the information was needed to determine if malware stole data or spread across the local area network.

This research was conducted a couple of years ago but is still relevant. The toolkit created during this research can be found here:

https://github.com/rasinfosec/memory_collector

References

[1] Secunia, Secunia Vulnerability Report 2014, http://secunia.com/?action=fetch&filename=secunia_vulnerability_review_2014.pdf

[2] Mandiant, Mandiant Redline, http://www.mandiant.com

[3] Volatility, code.google.com/p/volatility

[4] N. Davis, Live Memory Acquisition for Windows Operating Systems: Tools and Techniques for Analysis.

[5] M. Sikorski and A. Honig, Malware Analysis Primer, Practical Malware Analysis, 1st ed. No Starch Press, 2012, pp. Kindle Location 1127

[6] M. Sikorski and A. Honig, Malware Analysis Primer, Practical Malware Analysis, 1st ed. No Starch Press, 2012, pp. Kindle Location 1154

[7] Microsoft, Strings, http://technet.microsoft.com/en-us/sysinternals/bb897439.aspx

[8] M. Sikorski and A. Honig, Basic Static Techniques, Practical Malware Analysis, 1st ed. No Starch Press, 2012, pp. Kindle Location 1296

[9] A. Kumar, Implementation of a Windows Tool to Conduct Live Forensics Acquisition in Windows Systems, 2012

[10] M. Sikorski and A. Honig, Malware Analysis Primer, Practical Malware Analysis, 1st ed. No Starch Press, 2012, pp. Kindle Location 1173

[11] M. Sikorski and A. Honig, Malware Analysis Primer, Practical Malware Analysis, 1st ed. No Starch Press, 2012, pp. Kindle Location 1155

[12] M. Brand, Forensic Analysis Avoidance Techniques of Malware, 2007.

[13] M. Sikorski and A. Honig, Malware Analysis Primer, Practical Malware Analysis, 1st ed. No Starch Press, 2012, pp. Kindle Location 1173

[14] M. Egele et al, A Survey on Automated Dynamic Malware Analysis Techniques and Tools, https://iseclab.org/papers/malware_survey.pdf, pp. 19

[15] "vol," http://whatis.techtarget.com/definition/volatile-memory.

[16] C. Malin et al, Memory Forensics, Malware Forensics Field Guide for Windows Systems, Syngress, 2012, pp Kindle Location 704

[17] Belkasoft Ram Capturer, http://forensic.belkasoft.com/en/ram-capturer

[18] Microsoft, Sysinternals, psinfo, http://technet.microsoft.com/en-us/sysinternals/bb897550

[19] Microsoft, Sysinternals, logonsessions, http://technet.microsoft.com/en-us/sysinternals/bb896769

[20] Microsoft, Sysinternals, handle, http://technet.microsoft.com/en-us/sysinternals/bb896655

[21] Microsoft, Sysinternals, listdlls, http://technet.microsoft.com/en-us/sysinternals/bb896656

[22] Microsoft, Sysinternals, autorunsc, http://technet.microsoft.com/en-us/sysinternals/bb963902

[23] Microsoft, Sysinternals, psloglist, http://technet.microsoft.com/en-us/sysinternals/bb897544

[24] Microsoft, Sysinternals, http://msdn.microsoft.com/en-us/library/bb545021.aspx

[25] Practical Malware Analysis, http://practicalmalwareanalysis.com/fakenet/

[26] Bulk Extractor, http://www.forensicswiki.org/wiki/Bulk_extractor

[27] Wireshark, Wireshark, http://www.wireshark.org/